A comparison of Cohen's Kappa and Gwet's AC1 when calculating inter-rater reliability coefficients: a study conducted with personality disorder samples | springermedizin.de

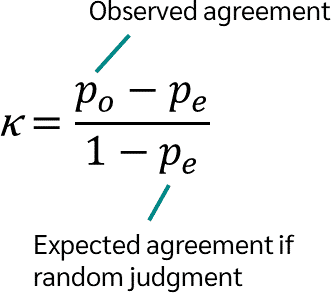

Cohen's Kappa: What it is, when to use it, and how to avoid its pitfalls | by Rosaria Silipo | Towards Data Science

Top: Kappa values with and without data balancing sorted by decreasing... | Download Scientific Diagram

Kappa Statistic is not Satisfactory for Assessing the Extent of Agreement Between Raters | Semantic Scholar

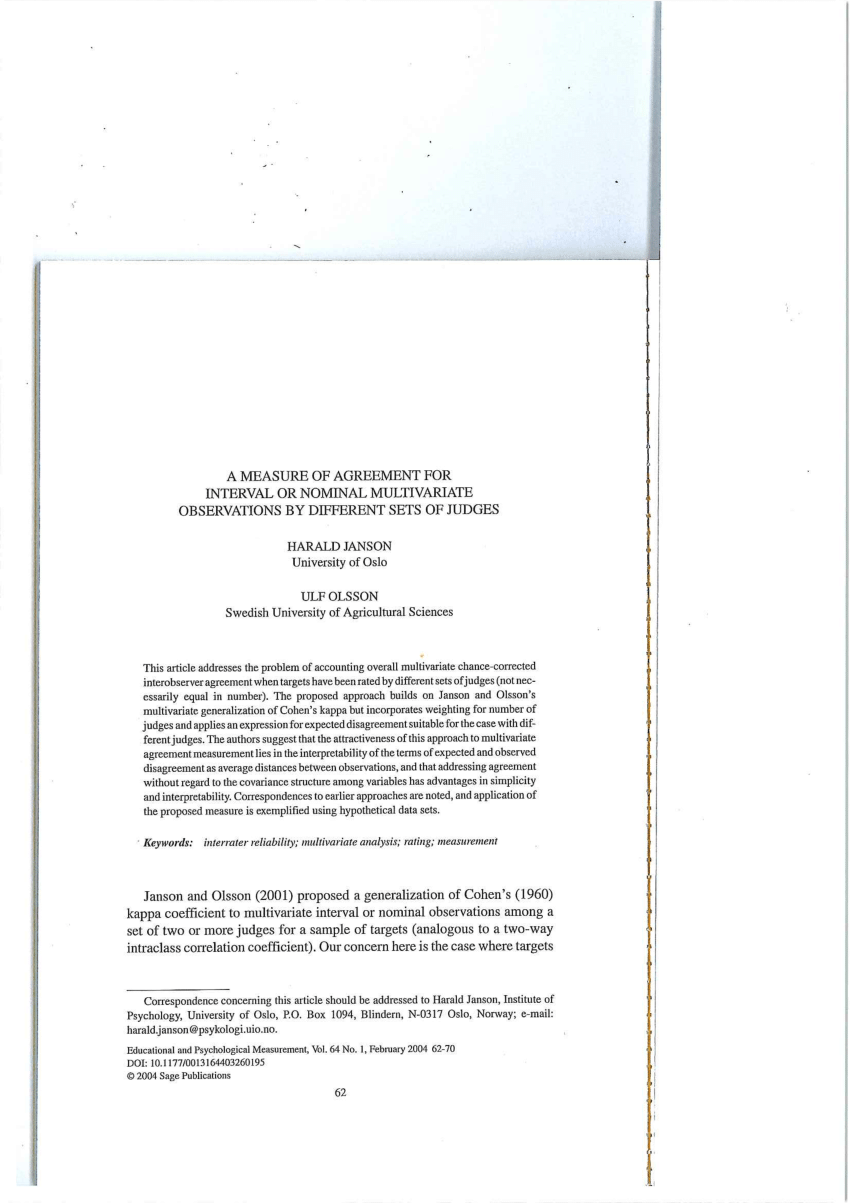

[PDF] A Measure of Agreement for Interval or Nominal Multivariate Observations by Different Sets of Judges

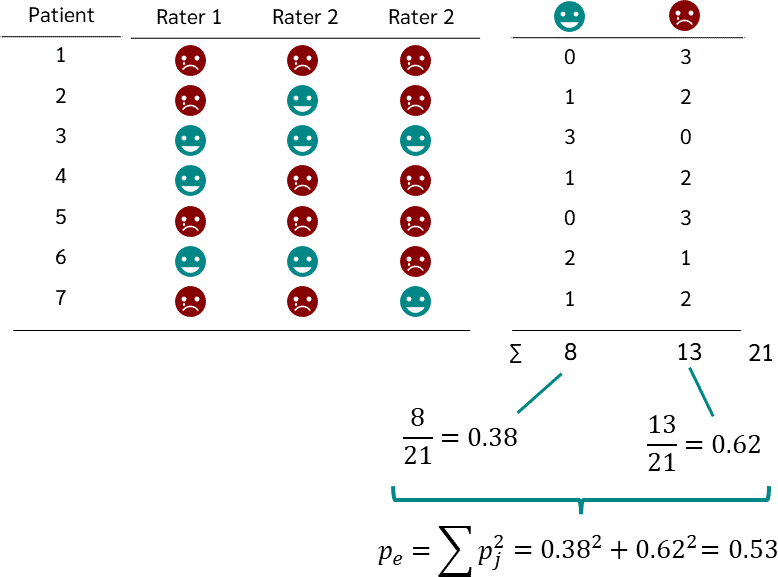

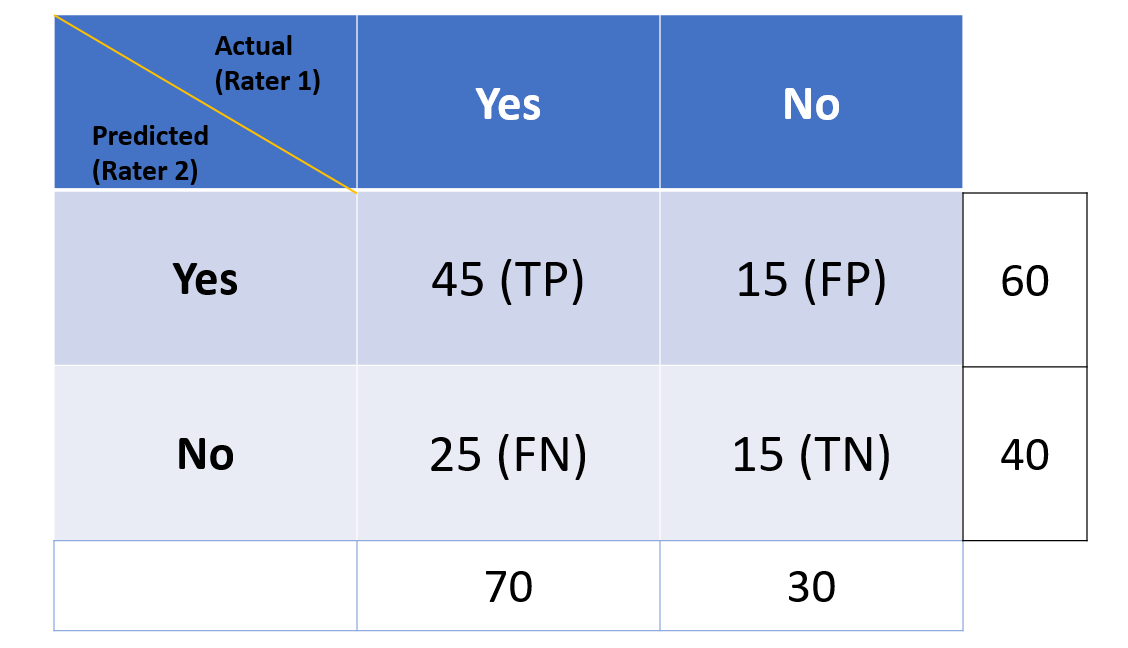

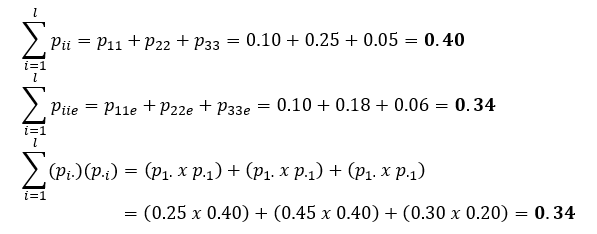

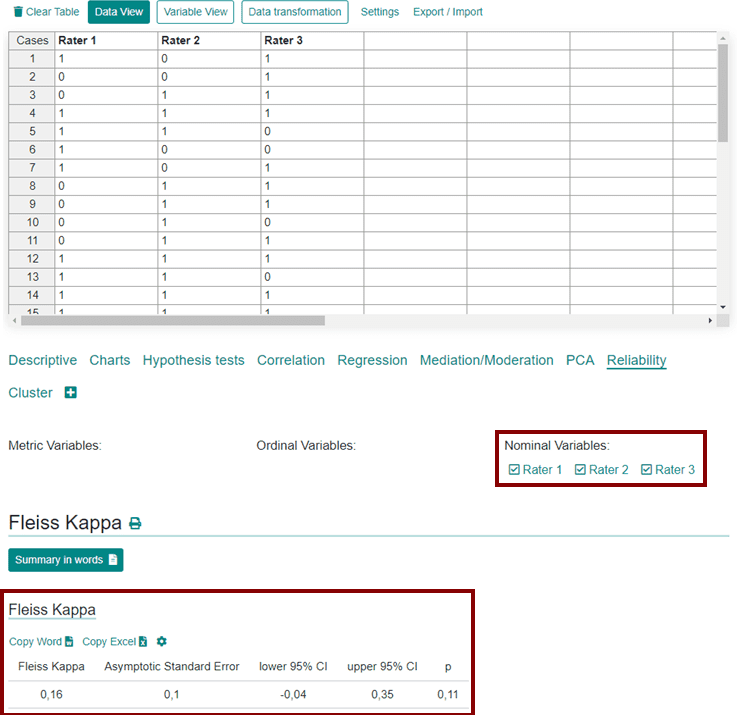

Cohen's Kappa and Fleiss' Kappa— How to Measure the Agreement Between Raters | by Audhi Aprilliant | Medium

![[PDF] The Reliability of Dichotomous Judgments: Unequal Numbers of Judges per Subject | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/d03b63208d0cfd7f060ca7dcb872f2e2631febd2/5-Table1-1.png)

[PDF] The Reliability of Dichotomous Judgments: Unequal Numbers of Judges per Subject | Semantic Scholar

A Measure of Agreement for Interval or Nominal Multivariate Observations by Different Sets of Judges | Semantic Scholar

Systematic literature reviews in software engineering—enhancement of the study selection process using Cohen's Kappa statistic - ScienceDirect

Fleiss Kappa coefficients with their 95% confidence intervals. The red... | Download Scientific Diagram

Cohen's Kappa vs Fleiss Kappa - We ask and you answer! The best answer wins! - Benchmark Six Sigma Forum

![Fleiss Kappa [Simply Explained] - YouTube](https://i.ytimg.com/vi/ga-bamq7Qcs/sddefault.jpg)

![Fleiss Kappa [Simply Explained] - YouTube](https://i.ytimg.com/vi/ga-bamq7Qcs/maxresdefault.jpg)